You use AI every day. ChatGPT, Claude, AI agents helping with research, decisions, content.

But you have zero idea what's happening under the hood.

Is the AI giving you real information or making shit up? Was it trained on biased data? Has it been compromised? Did the company secretly change how the model behaves?

You don't know. You just trust.

Rebecca Simmonds from the Walrus Foundation puts it bluntly: "Most AI systems rely on data pipelines that nobody outside the organization can independently verify."

You're trusting. They're controlling. And you have zero way to check.

And that's a problem when AI is making decisions that affect your money, your health, your life.

What's Really Broken

Here's what you can't verify today:

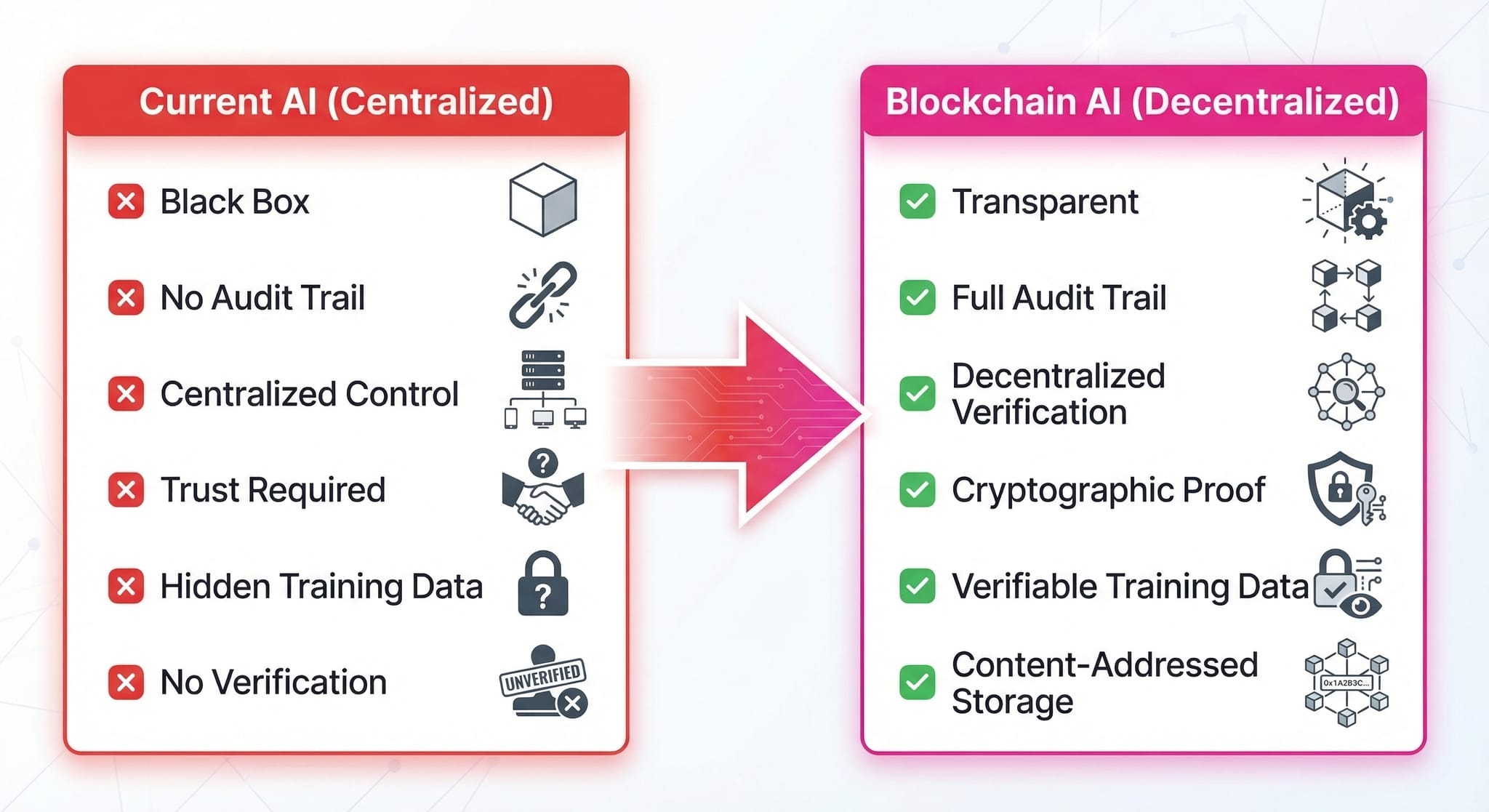

No audit trail. The AI gives you an answer. You can't see what information it actually used to generate that answer. It's a black box.

No verification of sources. Even when the AI cites sources, you can't prove it actually used them. Did it read that CoinDesk article and base the answer on it? Or did it generate the answer first, then search for something that looks like it supports it?

No transparency on training data. You have no idea if the AI was trained on clean data, propaganda, or manipulated information. You're trusting OpenAI/Anthropic/whoever built it.

No way to detect compromise. If an AI agent gets hacked or manipulated, how would you know? There's no record of what changed or when.

Zero accountability. If the AI hallucinates, gives bad advice, or spreads misinformation, good luck auditing what went wrong. The reasoning process is invisible.

Simmonds frames it clearly: "It's about moving from 'trust us' to 'verify this,' and that shift matters most in financial, legal, and regulated environments where auditability isn't optional."

Right now, you trust AI because you have no other choice.

Why It Matters

You might be thinking: "Sure, but ChatGPT helps me write emails and brainstorm ideas. It's fine for that."

You're right. For low-stakes tasks, blind trust works.

But that's not where the problem lives.

The problem shows up when AI starts making decisions that actually matter.

For example:

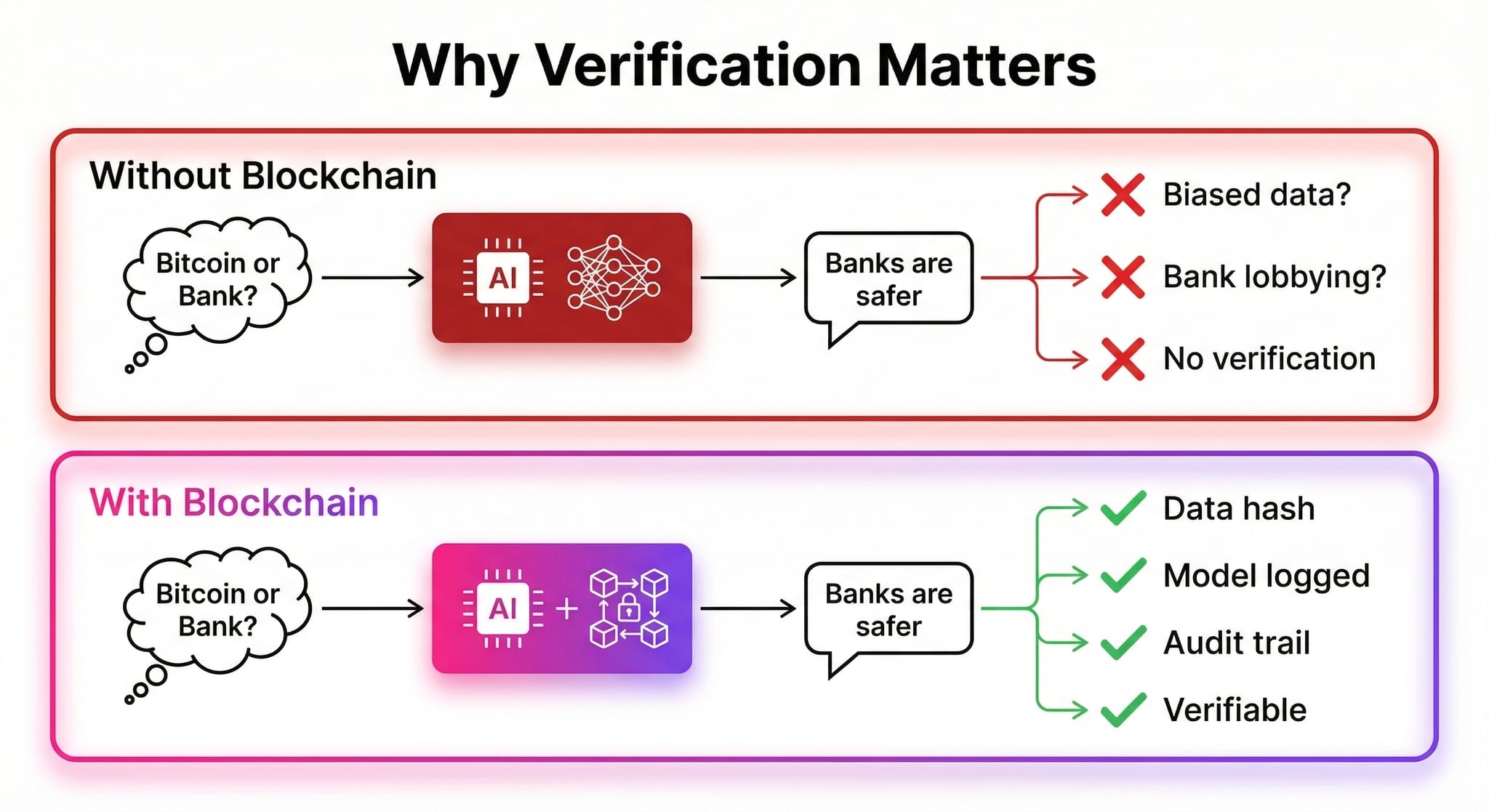

You ask an AI: "Should I invest in Bitcoin or keep my savings in a bank?"

The AI says: "Banks are safer. Bitcoin is too risky."

You have no idea:

• Was it trained on anti-Bitcoin content funded by banks?

• Did Jamie Dimon's team lobby the AI company to bias the model?

• Did the AI actually analyze current market data, or just repeat old narratives from 2022?

You can't verify any of it. So you follow the advice, miss what turns out to be, say, a 300% Bitcoin rally, and watch inflation erode your savings.

With blockchain verification, you could audit what data the AI used, check whether its training data was skewed, and trace the steps behind its answer. You'd know whether you were getting real analysis or institutional propaganda.

The finance industry knows this is a problem. As the Walrus Foundation notes, "small data errors can turn into real losses thanks to opaque data pipelines."

But here's the scarier part: Davide Crapis, the Ethereum Foundation's AI lead, warns that "we will probably see hacks orchestrated by AI" where "the old security models break when AI can impersonate a human."

AI-powered scams are coming. Without cryptographic verification, you won't know if you're talking to a real AI or a compromised one.

The pattern now:

Low stakes = blind trust is fine.

High stakes (money, health, major decisions) = blind trust is dangerous.

And AI is moving into high-stakes territory fast: financial advice, medical diagnosis, legal advice, business strategy.

You need to verify, not trust.

That's why the trust layer matters.

The Solution: Blockchain as the Trust Layer

Ethereum (and other blockchains) aim to solve this by making AI verifiable instead of blindly trusted.

On-chain audit trail. Every time an AI accesses data or makes a decision, it records on the blockchain which sources it used. You can verify: "This AI actually read these 15 articles before answering my question."

Cryptographic proof of sources. No more "did it really use that source?" The blockchain keeps a tamper-evident record of what information went into the AI's reasoning.
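To make the audit-trail idea concrete, here is a minimal sketch of a hash-chained log, the basic structure a blockchain provides, assuming each answer is recorded together with the SHA-256 hashes of the sources the agent reports reading. The `AuditLog` class and its methods are hypothetical; a real system would post entries to a chain rather than keep them in a Python list, and the log shows which sources were committed alongside an answer, not how the model weighed them.

```python
import hashlib
import json
from dataclasses import dataclass, field


def sha256_hex(data: bytes) -> str:
    """Return the hex-encoded SHA-256 digest of raw bytes."""
    return hashlib.sha256(data).hexdigest()


@dataclass
class AuditLog:
    """Hypothetical append-only log; each entry commits to the one before it."""
    entries: list = field(default_factory=list)

    def record(self, question: str, answer: str, sources: list[bytes]) -> dict:
        prev_hash = self.entries[-1]["entry_hash"] if self.entries else "0" * 64
        body = {
            "question": question,
            "answer_hash": sha256_hex(answer.encode()),
            # Commit to the exact bytes of every source the agent reports using.
            "source_hashes": [sha256_hex(s) for s in sources],
            "prev_hash": prev_hash,
        }
        # Canonical JSON (sorted keys) so the same body always hashes the same way.
        body["entry_hash"] = sha256_hex(json.dumps(body, sort_keys=True).encode())
        self.entries.append(body)
        return body

    def verify(self) -> bool:
        """Recompute every hash; editing any past entry breaks the chain."""
        prev_hash = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "entry_hash"}
            if body["prev_hash"] != prev_hash:
                return False
            if sha256_hex(json.dumps(body, sort_keys=True).encode()) != entry["entry_hash"]:
                return False
            prev_hash = entry["entry_hash"]
        return True


log = AuditLog()
articles = [b"article one text", b"article two text"]
log.record("Should I buy BTC?", "It depends on your risk tolerance.", articles)
print(log.verify())  # True

# Quietly swapping a source after the fact is detectable.
log.entries[0]["source_hashes"][0] = sha256_hex(b"different article")
print(log.verify())  # False
```

Because every entry includes the previous entry's hash, rewriting history means recomputing every later hash, which is exactly what publishing the chain on a blockchain prevents.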

Verifiable training data. Store cryptographic hashes of training datasets on-chain. Anyone can audit: "This AI was trained on X data from Y sources." Can't secretly swap in biased content.
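A training dataset is usually many files, not one. A common way to put all of them behind a single on-chain hash is a Merkle tree: hash every shard, hash pairs together until one root remains, and publish only the root. The sketch below assumes SHA-256 and duplicates the last node on odd-sized levels (the convention Bitcoin's transaction tree uses); the function names are hypothetical, and this is not presented as how any project named in this article builds its commitments.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_root(shards: list[bytes]) -> bytes:
    """Fold shard hashes pairwise until a single root hash remains."""
    level = [h(s) for s in shards]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # duplicate the last node on odd levels
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


def merkle_proof(shards: list[bytes], index: int) -> list[tuple[bytes, bool]]:
    """Collect sibling hashes from leaf to root; the bool marks a right-hand sibling."""
    level = [h(s) for s in shards]
    proof = []
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])
        sibling = index ^ 1
        proof.append((level[sibling], sibling > index))
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return proof


def verify_shard(shard: bytes, proof: list[tuple[bytes, bool]], root: bytes) -> bool:
    """Check that one shard belongs to the dataset committed to by `root`."""
    node = h(shard)
    for sibling, sibling_is_right in proof:
        node = h(node + sibling) if sibling_is_right else h(sibling + node)
    return node == root


shards = [b"shard-0", b"shard-1", b"shard-2", b"shard-3", b"shard-4"]
root = merkle_root(shards)  # this 32-byte value is what would be stored on-chain
proof = merkle_proof(shards, 2)
print(verify_shard(b"shard-2", proof, root))       # True
print(verify_shard(b"biased shard", proof, root))  # False
```

The design choice here is that an auditor can check a single shard with a handful of sibling hashes instead of downloading the whole dataset.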

How it works technically:

Each dataset gets a unique ID derived from its contents. If the data changes by even a single byte, the ID changes. That makes it possible to verify that the data in a pipeline is exactly what it claims to be, hasn't been altered, and remains available.
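The property described above is content addressing. A minimal sketch, assuming plain SHA-256 of the raw bytes as the ID function (this does not reproduce how Walrus actually derives its blob IDs, and it covers only integrity, not availability): because the ID is computed from the data, anyone can recompute it and compare before trusting what they received. `content_id` and `verify` are hypothetical names.

```python
import hashlib


def content_id(data: bytes) -> str:
    """Hypothetical content ID: the SHA-256 digest of the data itself."""
    return hashlib.sha256(data).hexdigest()


def verify(data: bytes, expected_id: str) -> bool:
    """Recompute the ID from the bytes actually received and compare."""
    return content_id(data) == expected_id


dataset = b"date,price\n2026-01-01,100\n2026-01-02,101\n"
registered_id = content_id(dataset)  # the value a pipeline would record on-chain

tampered = bytearray(dataset)
tampered[-2] ^= 0x01  # flip one bit in a single byte
print(verify(dataset, registered_id))          # True
print(verify(bytes(tampered), registered_id))  # False
```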

Tamper detection. AI agents sign their outputs and publish the signatures on-chain. If an AI's behavior changes, the blockchain shows when and what changed, so you can detect whether it has been compromised.
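Signing is what ties each output to a specific agent. Ethereum accounts use ECDSA over secp256k1, which Python's standard library does not include, so this sketch substitutes HMAC-SHA256 purely to show the sign-then-verify flow. HMAC is symmetric: unlike a real signature, it can only be checked by someone holding the same key. `AGENT_KEY` and the record layout are hypothetical.

```python
import hashlib
import hmac
import json

AGENT_KEY = b"hypothetical-agent-secret"  # stand-in for the agent's private key


def sign_output(agent_id: str, model_version: str, output: str) -> dict:
    """Bind an output to an agent and model version, then attach an HMAC tag."""
    record = {"agent": agent_id, "model": model_version, "output": output}
    payload = json.dumps(record, sort_keys=True).encode()
    record["tag"] = hmac.new(AGENT_KEY, payload, hashlib.sha256).hexdigest()
    return record


def check_output(record: dict) -> bool:
    """Recompute the tag; any edit to agent, model, or output makes this fail."""
    body = {k: v for k, v in record.items() if k != "tag"}
    payload = json.dumps(body, sort_keys=True).encode()
    expected = hmac.new(AGENT_KEY, payload, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, record["tag"])


rec = sign_output("agent-42", "v1.3", "Banks are safer. Bitcoin is too risky.")
print(check_output(rec))  # True
rec["output"] = "Buy everything now."
print(check_output(rec))  # False
```

Because each record also carries the model version, a series of these records posted over time shows when the version an agent reports changes.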

Transparent updates. Model changes recorded on-chain. No secret censorship or bias injection. The community can see what was modified and why.
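One way to picture a public update history is a list of entries recording which weights were deployed, when, and why, which anyone can compare against the model actually being served. This is a minimal sketch under that assumption; `ModelUpdate`, the block numbers, and the reasons are hypothetical illustration values, not any real registry's format.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelUpdate:
    """One hypothetical on-chain entry: which weights were deployed, when, and why."""
    block: int
    weights_hash: str
    reason: str


def fingerprint(weights: bytes) -> str:
    return hashlib.sha256(weights).hexdigest()


def audit_deployment(history: list[ModelUpdate], served_weights: bytes) -> str:
    """Report whether the served model matches the latest publicly recorded update."""
    served = fingerprint(served_weights)
    if history and served == history[-1].weights_hash:
        return f"ok: matches update at block {history[-1].block} ({history[-1].reason})"
    for update in reversed(history):
        if served == update.weights_hash:
            return f"stale: serving the version from block {update.block}"
    return "unregistered: served weights match no recorded update"


history = [
    ModelUpdate(100, fingerprint(b"weights-v1"), "initial release"),
    ModelUpdate(250, fingerprint(b"weights-v2"), "retrained on newer market data"),
]
print(audit_deployment(history, b"weights-v2"))
# ok: matches update at block 250 (retrained on newer market data)
print(audit_deployment(history, b"weights-v2-secretly-edited"))
# unregistered: served weights match no recorded update
```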

"Don't trust, verify" - but for AI.

You're not blindly trusting anymore. You can audit, verify, and hold AI systems accountable.

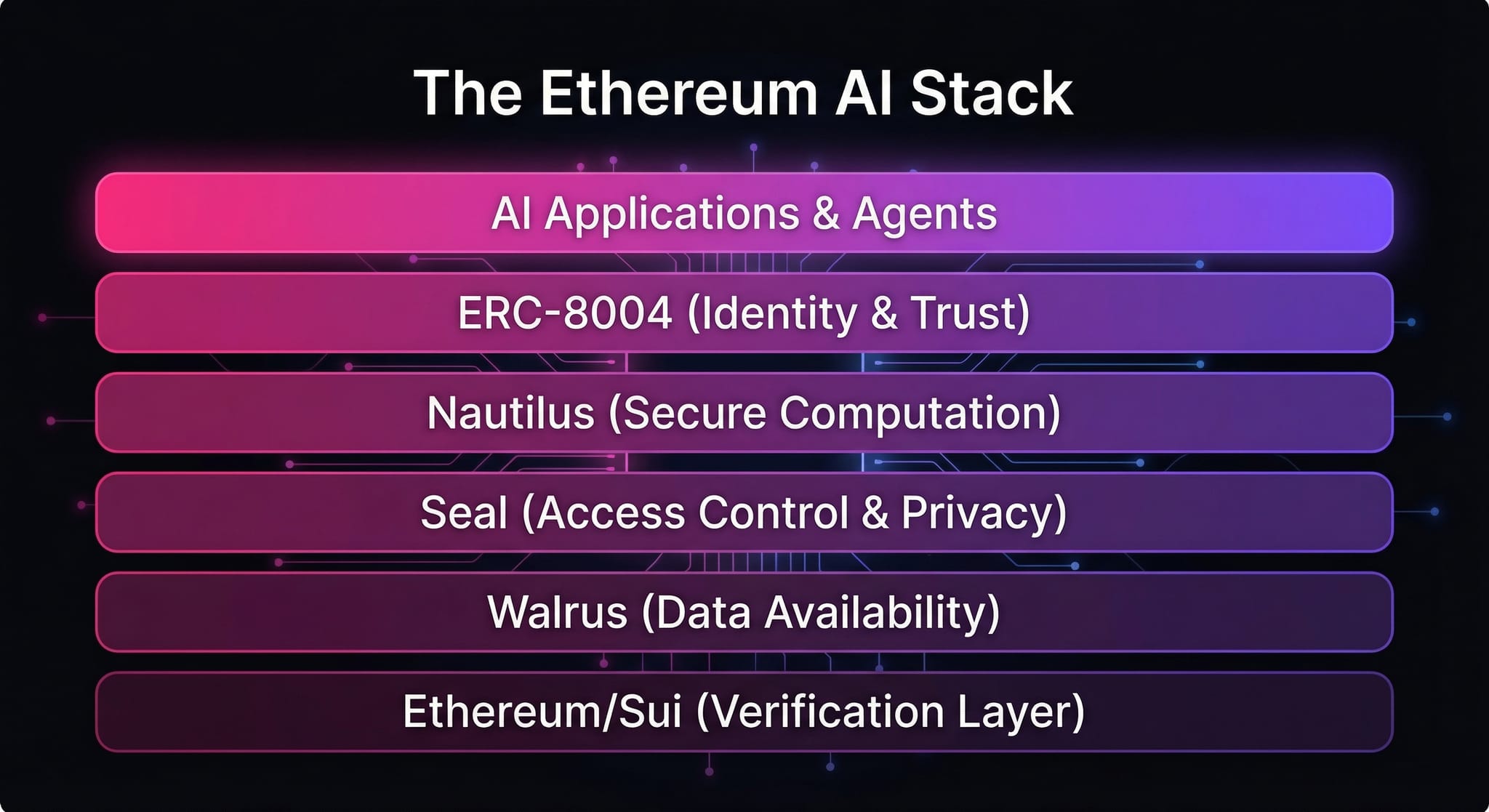

This isn't theoretical. In January 2026, the Ethereum Foundation launched ERC-8004, a standard for AI agent identity and trust that is now going live on mainnet.

Projects like elizaOS (open-source AI agent platform) and Zark Lab (blockchain-native AI intelligence) are already using blockchain-based verifiable data infrastructure.

The tech exists. The adoption is happening.

The infrastructure is live right now:

• ERC-8004 - AI agent identity, reputation, and validation registries on Ethereum

• Walrus - Decentralized data availability and provenance layer

• Nautilus - Secure off-chain AI workloads with on-chain proofs

• Seal - Access control and encryption for AI data

• Ethereum/Sui - On-chain records of policy events and receipts

Davide Crapis of the Ethereum Foundation puts the stakes this way: "If AI doesn't have the properties we care about — self-sovereignty, censorship resistance, privacy — and then we use AI for everything, basically no one has those properties anymore."

That's why Ethereum is positioning itself as the trust layer for AI. Not to compete with OpenAI or Google on model size, but to ensure that as AI becomes the interface to the internet, it doesn't quietly recentralize power.

You're already using AI every day. The question is: Do you want to trust it, or verify it?

References

1. Ethereum Foundation Wants the Network to Be the Trust Layer for AI - CoinDesk, March 4, 2026

2. Why Verifiable Data Is the Missing Layer in AI: Walrus - Decrypt, March 4, 2026

3. Ethereum's ERC-8004 Aims to Put Identity and Trust Behind AI Agents - CoinDesk, January 28, 2026

4. Walrus Protocol - Official Website

5. Ethereum Foundation AI Team - CoinDesk, September 15, 2025